Goodbye Dokploy: The Homelab Grew Up

Remember when this website lived in a Dokploy LXC container on my Proxmox mini-PC? Those were simpler times. Peaceful times. Times when "infrastructure" meant "one LXC with some Docker containers on it." Proxmox was already the hypervisor on that same box, running VMs and LXCs for other things. I just hadn't committed to doing it *properly* yet. Then I got ideas. Then I replaced the Dokploy LXC with a proper Kubernetes cluster, a GitHub runner, and enough automation to make Skynet jealous. Now everything on that same mini-PC is wired together with Terraform, Ansible, scripts, and auto-updates. Send help.

What Happened

The old setup was fine. Dokploy in an LXC on Proxmox, Docker containers, reverse proxy, SSL. It worked for the website. But the rest of the homelab was already living on the same Proxmox host — VMs for this, LXCs for that, manually maintained, hand-tweaked, slightly chaotic. The website deserved better than a monolithic LXC. I wanted to bring everything up to the same level:

- Infrastructure as Code so Proxmox guests are defined in Terraform, not clicked into existence

- A Kubernetes cluster running on Proxmox VMs, because container orchestration beats docker-compose in a single LXC

- GitOps with Argo CD so cluster state lives in git and deploys itself

- A proper GitHub runner for CI/CD that actually runs inside the cluster

- Observability because if you can’t graph it, did it even happen?

- Single Sign-On because typing passwords is for peasants

- Auto-updates so security patches happen without me remembering to log in

So I deleted the Dokploy LXC and started building proper VMs on the same Proxmox host. Same mini-PC, entirely different philosophy.

The New Architecture

Proxmox: It Was Always There

Proxmox has been the hypervisor backbone for ages. The change was treating it like proper infrastructure instead of a VM vending machine I manually operated. Now guests are provisioned through Terraform and configured through Ansible:

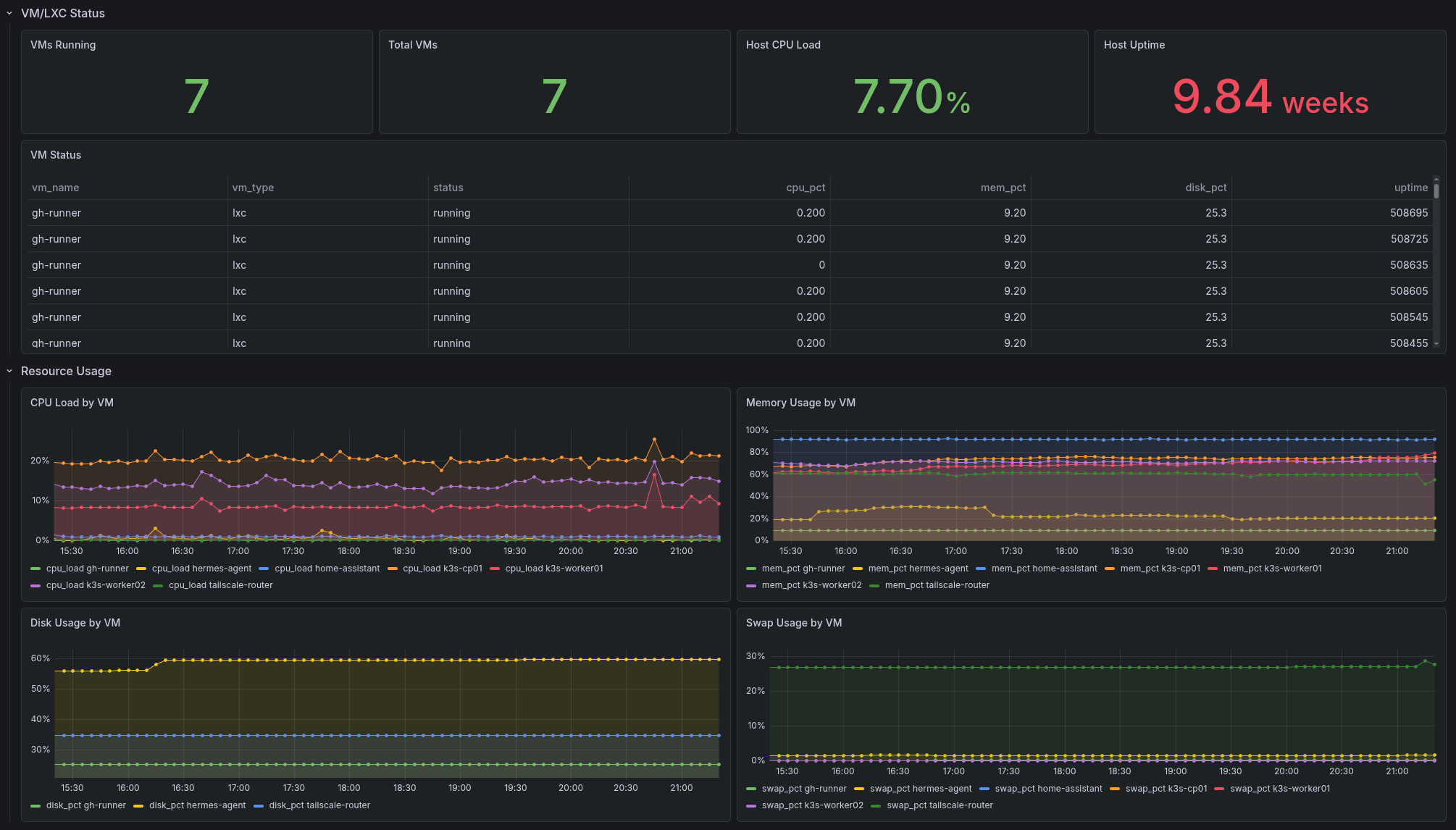

| Guest | Type | Purpose |
|---|---|---|
| k3s-cp01 | VM | K3s control plane |
| k3s-worker01 | VM | K3s worker (the beefy one) |
| k3s-worker02 | VM | K3s worker |
| tailscale-router | LXC | Tailscale exit node |
| gitlab-runner | LXC | GitHub CI/CD runner |

Home Assistant stays as a protected VM because some things are too sacred to terraform.

The Proxmox dashboard tracks every VM and LXC: who’s running, resource usage, disk consumption, swap pressure, and uptime. At a glance I can see if something’s misbehaving before it becomes a 2 AM problem.

Terraform: Thou Shalt Provision

Guest creation is handled by Terraform with the bpg/proxmox provider. I define a VM or LXC in HCL, run terraform apply, and Proxmox spawns it from a Debian cloud image. No more clicking through web interfaces. The machines are cattle, not pets.

Ansible: Thou Shalt Configure (and Update)

Once Terraform births the VMs, Ansible takes over. Playbooks handle:

- Base OS configuration, security hardening, time sync

- K3s installation and upgrades

- Telegraf deployment for metrics

- Proxmox host bootstrap

- Auto-updates so unattended-upgrades keeps systems patched
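
A minimal sketch of the auto-update piece, assuming Debian guests. The play name and the choice to write `20auto-upgrades` directly are illustrative assumptions; the real playbook may be organized differently.

```yaml
# Hypothetical play: install unattended-upgrades and enable the periodic apt jobs.
- name: Enable unattended security updates (sketch)
  hosts: all
  become: true
  tasks:
    - name: Install unattended-upgrades
      ansible.builtin.apt:
        name: unattended-upgrades
        state: present
        update_cache: true

    - name: Turn on daily package list refresh and unattended upgrade runs
      ansible.builtin.copy:
        dest: /etc/apt/apt.conf.d/20auto-upgrades
        content: |
          APT::Periodic::Update-Package-Lists "1";
          APT::Periodic::Unattended-Upgrade "1";
        owner: root
        group: root
        mode: "0644"
```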

The inventory is structured with groups for control planes, workers, LXCs, and protected VMs. Running ansible-playbook playbooks/k3s-install.yml builds the entire cluster from scratch. It’s magical and slightly terrifying.
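
For a sense of what that grouping could look like, here is a sketch of a YAML inventory. The guest names come from the table above; the group names, the file path `inventory/hosts.yml`, and the `home-assistant` host name are assumptions made for illustration.

```yaml
# inventory/hosts.yml (hypothetical path and group names)
all:
  children:
    k3s_control_plane:
      hosts:
        k3s-cp01:
    k3s_workers:
      hosts:
        k3s-worker01:
        k3s-worker02:
    lxcs:
      hosts:
        tailscale-router:
        gitlab-runner:
    protected_vms:
      hosts:
        home-assistant:   # assumed host name for the Home Assistant VM
```

With a layout like this, something like `ansible-playbook -i inventory/hosts.yml playbooks/k3s-install.yml --limit k3s_control_plane` would restrict a run to the control plane, and the protected group can simply be left out of any play that should never touch it.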

K3s: Kubernetes, But Light

Three nodes. One control plane, two workers. Traefik disabled because I have opinions. Ingress-nginx with MetalLB handles routing. cert-manager talks to Domeneshop for DNS-01 challenges. Everything gets real TLS certificates.
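
Disabling the bundled Traefik is a one-liner in the K3s server config. A sketch, assuming the default config file location; the commented-out `servicelb` line is a common companion when MetalLB is in use, but whether this cluster sets it is an assumption.

```yaml
# /etc/rancher/k3s/config.yaml on the control plane node
disable:
  - traefik
  # - servicelb   # often disabled too when MetalLB hands out LoadBalancer IPs (assumption)
```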

Argo CD: The GitOps Overlord

Argo CD watches the git repo and continuously syncs cluster state. I push a manifest, Argo CD notices, the application deploys. The argocd/apps.yml app-of-apps bootstraps everything:

- Authentik (SSO)

- External Secrets

- Grafana

- InfluxDB 3 Enterprise

- Mailu (mail server)

- NetBox

- OpenBao

- OpenTelemetry

- Teleport

If the cluster burns down, I can rebuild the entire platform from a single git push. In theory. In practice there are still a few bootstrap secrets I copy around like a digital squirrel.
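
The app-of-apps pattern boils down to one root Application that points at a directory of child Application manifests. A sketch of what such a root might look like; the repository URL, the `argocd/apps` path, and the sync options are placeholders, not the actual contents of `argocd/apps.yml`.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: apps
  namespace: argocd
spec:
  project: default
  source:
    repoURL: https://example.com/homelab.git   # hypothetical repo
    targetRevision: HEAD
    path: argocd/apps                          # hypothetical directory of child Applications
  destination:
    server: https://kubernetes.default.svc
    namespace: argocd
  syncPolicy:
    automated:
      prune: true      # remove resources that were deleted from git
      selfHeal: true   # revert manual drift back to the git state
```

Applying this one manifest by hand is the bootstrap step; everything listed under it is then created and kept in sync by Argo CD itself.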

GitHub Runner: Proper CI/CD

The old GitHub Actions runner on a bare LXC is gone. Now there’s a proper GitHub runner integrated into the cluster, running pipelines that build, test, and deploy. CI/CD that actually understands Kubernetes. Revolutionary, I know.
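
From the repository side, using a self-hosted runner mostly comes down to the `runs-on` label. A rough sketch of a workflow; the workflow name, branch, and `make` targets are hypothetical stand-ins for whatever the real pipelines run.

```yaml
# .github/workflows/build.yml (hypothetical)
name: build-and-test
on:
  push:
    branches: [main]

jobs:
  build:
    runs-on: self-hosted        # route the job to the homelab runner instead of GitHub-hosted ones
    steps:
      - uses: actions/checkout@v4
      - name: Run tests
        run: make test          # hypothetical target
      - name: Build
        run: make build         # hypothetical target
```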

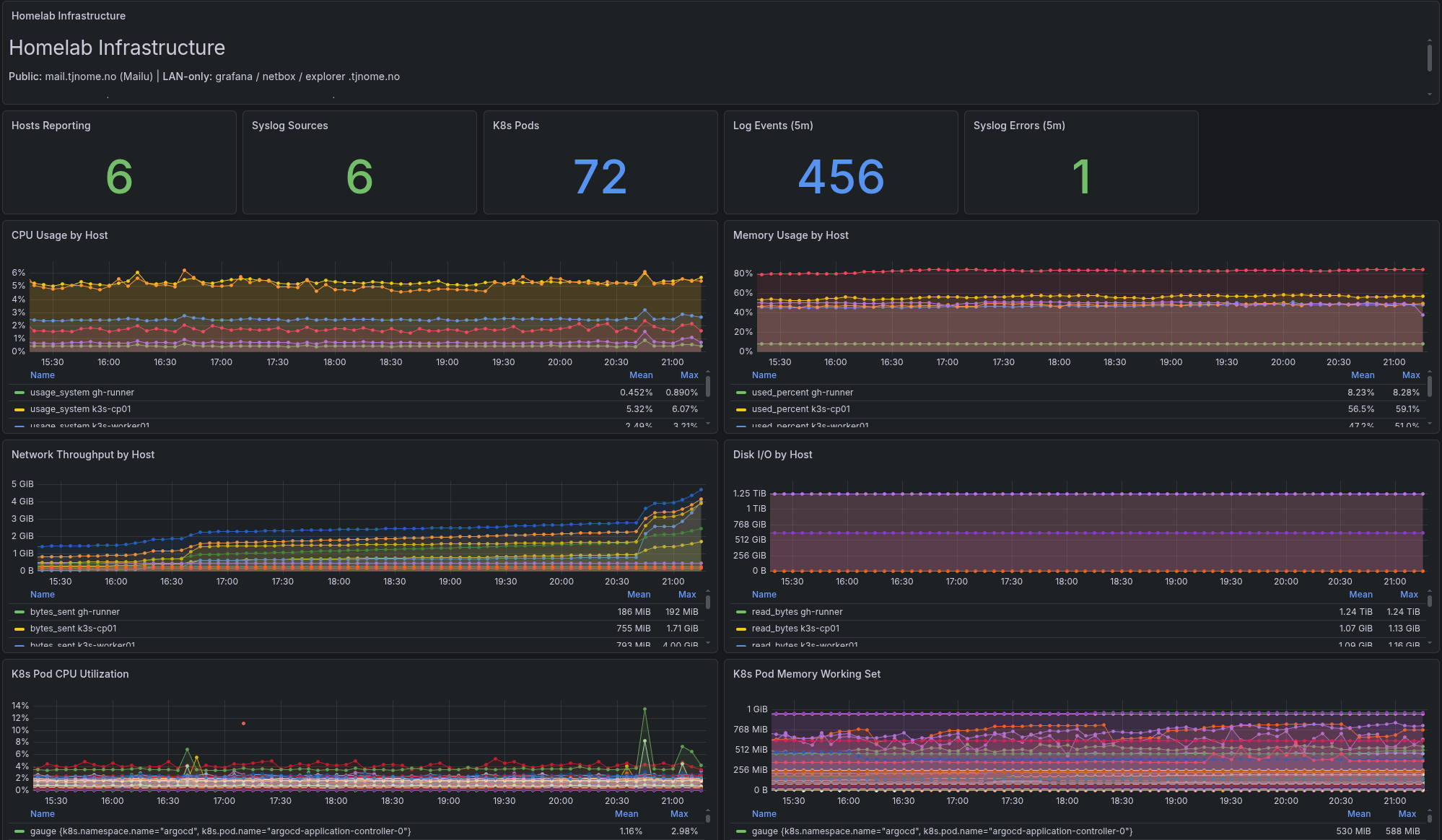

Observability: Because Graphs Are Pretty

- InfluxDB 3 Enterprise for time-series data

- OpenTelemetry Collector DaemonSet receiving metrics, traces, and logs

- Telegraf on every host for system metrics, syslog, and Proxmox stats

- Grafana with dashboards stored in git and synced automatically

I can tell you the CPU usage of any VM, the memory pressure on a worker node, or how many mail transactions failed last Tuesday.
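
A Collector that accepts all three signal types can be sketched with a single OTLP receiver feeding three pipelines. The exporter and its endpoint below are placeholders; how the data actually reaches InfluxDB in this setup is not shown here and is an assumption.

```yaml
receivers:
  otlp:
    protocols:
      grpc:
        endpoint: 0.0.0.0:4317
      http:
        endpoint: 0.0.0.0:4318

processors:
  batch: {}   # batch telemetry before export to cut down on requests

exporters:
  otlphttp:
    endpoint: https://telemetry.example.internal   # hypothetical backend endpoint

service:
  pipelines:
    metrics:
      receivers: [otlp]
      processors: [batch]
      exporters: [otlphttp]
    traces:
      receivers: [otlp]
      processors: [batch]
      exporters: [otlphttp]
    logs:
      receivers: [otlp]
      processors: [batch]
      exporters: [otlphttp]
```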

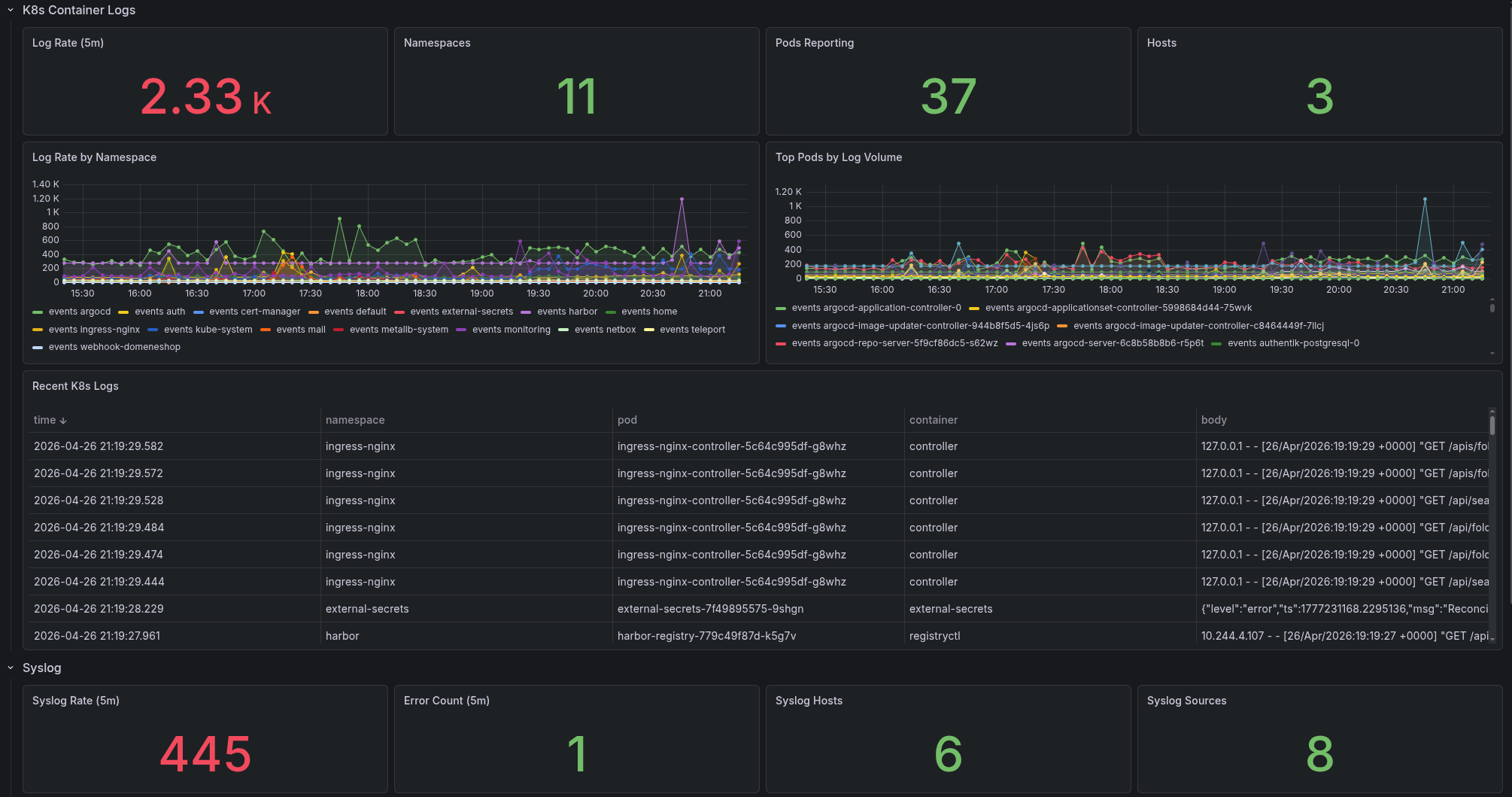

The overview dashboard shows the whole picture: hosts reporting, K8s pods, log events, CPU and memory by host, network throughput, disk I/O, and per-pod resource usage. It’s the “mission control” view I check when I want to feel like I’m running a data center. (I’m not. It’s three VMs in a mini-PC. But the graphs don’t know that.)

Log aggregation means I can dig into container logs and syslog across the entire stack from one place. No more SSHing into three different hosts to trace a request.

Authentik: One Login to Rule Them All

Authentik provides native OIDC for Grafana, Argo CD, Harbor, and NetBox. No more separate passwords.
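
As an illustration of the Grafana side, a generic OAuth block in Helm-style values might look roughly like this. The host names, client ID, and environment variable name are all hypothetical; the endpoint paths follow Authentik's usual `/application/o/` layout.

```yaml
# Grafana Helm values fragment (sketch)
grafana.ini:
  server:
    root_url: https://grafana.example.internal          # hypothetical host
  auth.generic_oauth:
    enabled: true
    name: Authentik
    client_id: grafana                                  # hypothetical client ID
    client_secret: "$__env{GRAFANA_OAUTH_CLIENT_SECRET}" # read from an env var (name assumed)
    scopes: openid profile email
    auth_url: https://auth.example.internal/application/o/authorize/
    token_url: https://auth.example.internal/application/o/token/
    api_url: https://auth.example.internal/application/o/userinfo/
```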

Mailu: Yes, I Run My Own Mail Server

Some people skydive for adrenaline. I run a self-hosted mail server. Mailu handles SMTP, IMAP, webmail, and admin. Raw mail protocols sit on a MetalLB VIP with OPNsense port forwarding. DNS records sync via script. It works. Most of the time.
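
Putting raw mail protocols on a MetalLB address means exposing them through a `LoadBalancer` Service rather than the HTTP ingress. A sketch, with the Service name, namespace, selector label, and exact port list as assumptions:

```yaml
apiVersion: v1
kind: Service
metadata:
  name: mailu-front-external    # hypothetical name
  namespace: mailu              # hypothetical namespace
spec:
  type: LoadBalancer            # MetalLB assigns the VIP from its address pool
  selector:
    app.kubernetes.io/component: front   # hypothetical label on the Mailu front pods
  ports:
    - name: smtp
      port: 25
      targetPort: 25
      protocol: TCP
    - name: submission
      port: 587
      targetPort: 587
      protocol: TCP
    - name: imaps
      port: 993
      targetPort: 993
      protocol: TCP
```

The firewall then only needs to forward those ports to the one VIP, regardless of which node the mail pods land on.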

The same mini-PC that once ran Dokploy in an LXC now runs a fully automated, observable, GitOps-driven infrastructure stack. Proxmox went from “the thing I manually clicked around in” to “the center of everything.”

Somewhere between docker-compose in an LXC and a three-node Kubernetes cluster, I lost my mind. But I gained a homelab that manages itself — all on the same hardware that started this whole mess.

And when something breaks at 2 AM? At least I have graphs showing exactly when it broke.